Lightweight LLM Powers Japanese Enterprise AI Deployments

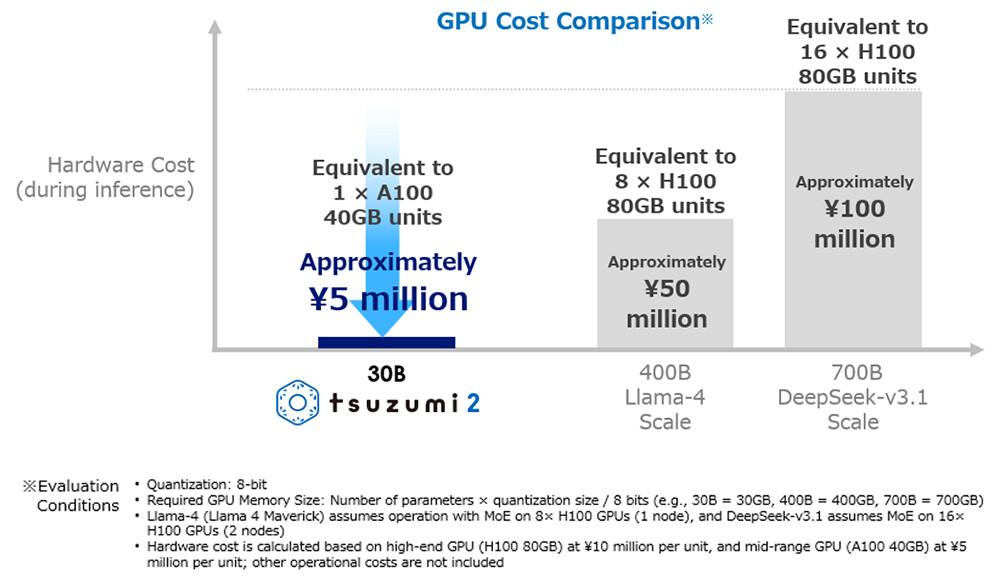

Enterprise AI deployment often faces a key challenge: organisations require advanced language models but hesitate due to the high infrastructure costs and energy consumption associated with cutting-edge systems. NTT’s recent introduction of tsuzumi 2, a lightweight large language model (LLM) that operates on a single GPU, offers a solution to this problem. Early deployments of tsuzumi 2 demonstrate performance comparable to larger models while significantly reducing operational expenses.

The business rationale behind this innovation is clear. Conventional large language models demand dozens or even hundreds of GPUs, resulting in substantial electricity use and high operational costs. These factors create barriers that make AI deployment impractical for many organisations, especially those with limited budgets or power infrastructure constraints.

How a Lightweight LLM Powers Japanese Enterprises Efficiently

For enterprises in markets with limited power resources or strict budget controls, the resource demands of conventional AI systems often rule AI out as a viable option. NTT’s press release illustrates these practical challenges with the example of Tokyo Online University. The university maintains an on-premises platform to keep student and staff data within its campus network, fulfilling data sovereignty requirements common in educational and regulated sectors.

After confirming that tsuzumi 2 can handle complex context understanding and long-document processing at production-ready levels, Tokyo Online University deployed the model to enhance course Q&A, support teaching material creation, and provide personalised student guidance. Operating on a single GPU allows the university to avoid capital expenditures on GPU clusters and reduce ongoing electricity costs. More importantly, deploying the model on-premises addresses data privacy concerns that often prevent educational institutions from using cloud-based AI services that process sensitive student data.

NTT’s internal tests in financial inquiry handling further illustrate the model’s efficiency. According to NTT, tsuzumi 2 matched or surpassed leading external models in these tests despite requiring far less infrastructure. This high performance-to-resource ratio is crucial for enterprises where total cost of ownership influences AI adoption decisions. The model achieves what NTT describes as “world-top results among models of comparable size” in Japanese language tasks, excelling in business domains that prioritise knowledge, analysis, instruction-following, and safety.

Because tsuzumi 2 is optimised specifically for Japanese language processing, it reduces the need for larger multilingual models that demand significantly more computational power. Reinforced knowledge in sectors such as finance, medicine, and public services, developed in response to customer needs, enables domain-specific deployments without extensive fine-tuning. Additionally, the model’s support for Retrieval-Augmented Generation (RAG) and fine-tuning facilitates the efficient creation of specialised applications for enterprises with proprietary knowledge bases or industry-specific terminology, where generic models often fall short.
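To make the RAG idea concrete, here is a minimal sketch of the general pattern: retrieve the passages most relevant to a question from a proprietary knowledge base, then place them in the prompt given to the model. It is an illustration only, not NTT's implementation. The function names, the sample knowledge base, and the simple word-overlap scoring are all assumptions made for this example. The press release does not describe a tsuzumi 2 API, so the sketch stops at building the prompt.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two term-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query, best first."""
    q = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(query, documents, k=2):
    """Assemble a prompt that grounds the model in the retrieved passages."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )

# Hypothetical in-house knowledge base (invented for this example).
KNOWLEDGE_BASE = [
    "A loan application review takes five business days.",
    "The cafeteria opens at eight in the morning.",
    "Loan interest rates are fixed for the first year.",
]

prompt = build_prompt(
    "How long does a loan application review take?", KNOWLEDGE_BASE
)
print(prompt)  # This text would be passed to a locally hosted model.
```

A production system would typically replace the word-count similarity with embedding-based search and would need a tokenizer suited to Japanese text, but the retrieve-then-prompt structure stays the same.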

Data Sovereignty and Multimodal Capabilities Drive Adoption

Beyond cost savings, data sovereignty is a major factor driving the adoption of lightweight LLMs in regulated industries. Organisations managing confidential information face risks when processing data through external AI services governed by foreign jurisdictions. NTT positions tsuzumi 2 as a “purely domestic model” developed entirely in Japan, designed to operate on-premises or in private clouds. This approach addresses concerns common in Asia-Pacific markets regarding data residency, regulatory compliance, and information security.

An example of this strategy in practice is the partnership between FUJIFILM Business Innovation and NTT DOCOMO BUSINESS. FUJIFILM’s REiLI technology transforms unstructured corporate data (such as contracts, proposals, and documents that mix text and images) into structured information. By integrating tsuzumi 2’s generative capabilities, the solution enables advanced document analysis without sending sensitive corporate data to external AI providers. This combination of lightweight models with on-premises data processing offers a practical enterprise AI approach that balances capability, security, compliance, and cost.

Tsuzumi 2 also includes built-in multimodal support and can handle text, images, and voice within enterprise applications. This matters for business workflows that require AI to process multiple data types without deploying separate specialised models. Use cases such as manufacturing quality control, customer service, and document processing often involve mixed inputs. Using a single model for all three data types reduces integration complexity compared with running multiple specialised systems that each have different operational needs.

Market Context and Considerations for Deployment

NTT’s lightweight LLM approach contrasts with hyperscaler strategies that focus on massive models with broad capabilities. Frontier models from companies like OpenAI, Anthropic, and Google offer cutting-edge performance for enterprises with large AI budgets and advanced technical teams, but the hyperscale approach excludes many organisations. This is especially true in Asia-Pacific regions where infrastructure quality varies widely.

Factors such as power reliability, internet connectivity, data centre availability, and regulatory frameworks differ significantly across markets. Lightweight models that enable on-premises deployment accommodate these variations better than solutions that depend on consistent access to cloud infrastructure.

Enterprises considering lightweight LLM deployment should evaluate several factors. Tsuzumi 2’s reinforced knowledge in financial, medical, and public sectors suits specific domains, but organisations in other industries need to assess whether the available domain knowledge meets their needs. The model’s optimisation for Japanese language processing benefits operations focused on the Japanese market but may not be ideal for multilingual enterprises requiring consistent performance across languages.

On-premises deployment demands internal technical capabilities for installation, maintenance, and updates. Organisations lacking these resources might find cloud-based alternatives simpler to operate despite higher costs. While tsuzumi 2 matches larger models in many domains, frontier models may outperform it in niche or novel applications. Enterprises should weigh whether domain-specific performance is sufficient or whether broader capabilities justify the additional infrastructure investment.

NTT’s tsuzumi 2 demonstrates that sophisticated AI implementation does not necessarily require hyperscale infrastructure. For organisations whose needs align with lightweight model capabilities, early deployments show tangible business benefits: lower operational costs, enhanced data sovereignty, and production-ready performance in targeted domains.

As enterprises continue to adopt AI, the balance between capability requirements and operational constraints is driving demand for efficient, specialised solutions. The question for organisations is not whether lightweight models are “better” than frontier systems, but whether they meet specific business needs while addressing the cost, security, and operational limitations that make other approaches impractical. The experiences of Tokyo Online University and FUJIFILM Business Innovation suggest that, for a growing number of organisations, the answer is yes.

