CEO of Roblox Says Child Predators on the Platform Are an “Opportunity”

Roblox, the popular children’s gaming platform with over 200 million daily users at its peak, faces problems that go well beyond entertainment. Since its launch in 2006, the platform has become a hotspot for harmful activity, including online casinos run by racketeers targeting underage users and pedophiles seeking victims. These problems have put Roblox under intense scrutiny, with 20 federal lawsuits raising concerns about children’s safety on the platform.

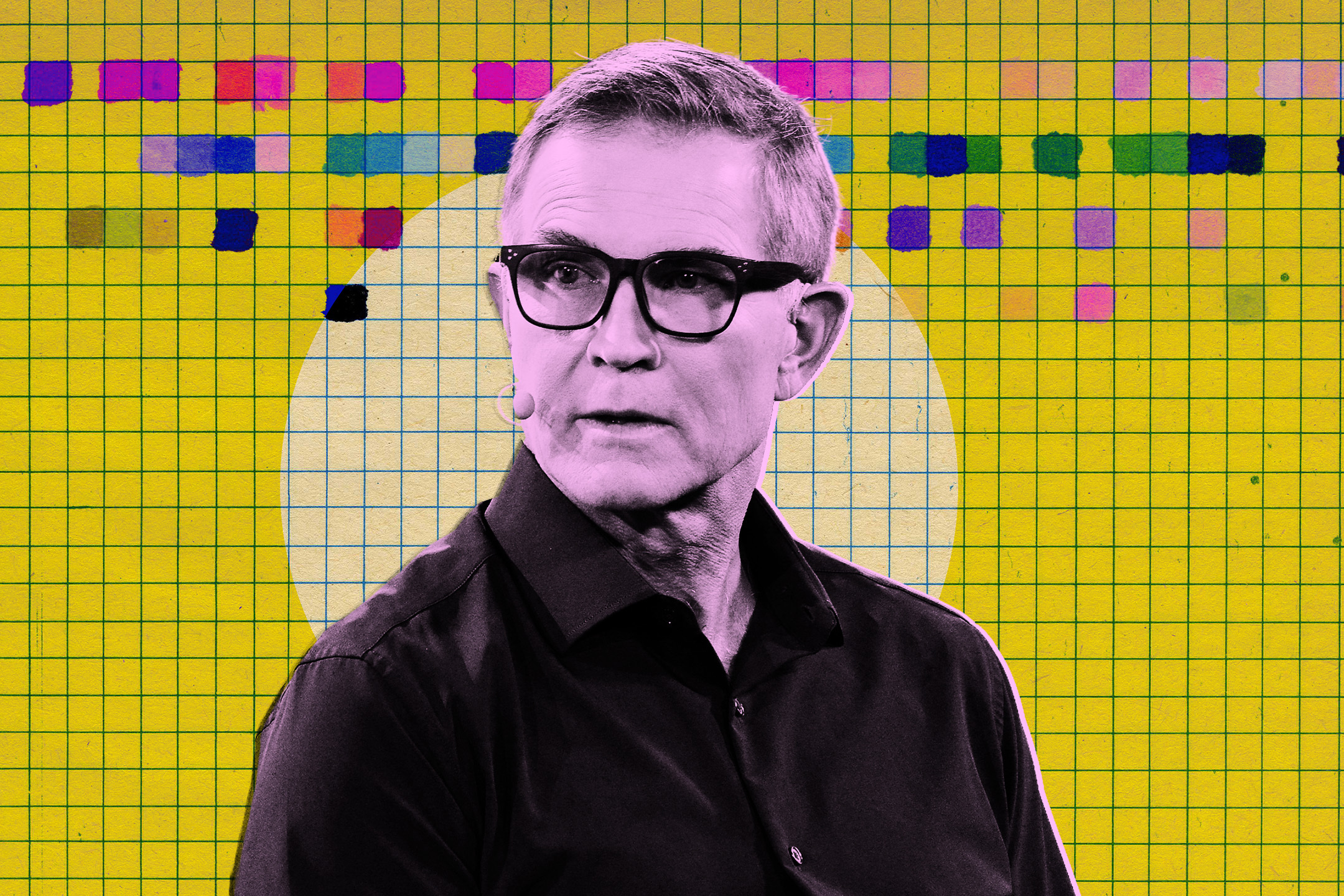

In response to growing criticism, Roblox has introduced a facial recognition system designed to group users of similar ages together. However, this solution has sparked new concerns. More recently, comments made by Roblox CEO and co-founder David Baszucki have drawn significant attention and controversy.

CEO of Roblox Says Child Predators Present Both Challenges and Opportunities

During a detailed interview on the New York Times’ “Hard Fork” podcast, Baszucki addressed the issue of child predators on Roblox in an unexpected way. Rather than minimizing the problem, he described it as not only a “problem” but also an “opportunity.” When asked by co-host Casey Newton about his perspective on child predators, Baszucki responded by framing the challenge as a chance to innovate.

He said, “How do we allow young people to build, communicate and hang out together? How do we build the future of communication at the same time?” Baszucki explained that Roblox has been working on these questions since its inception. He emphasized the scale of the platform, with 150 million daily active users spending 11 billion hours a month on Roblox, and highlighted the ongoing effort to find the best technological solutions to keep the platform safe and engaging.

Later in the conversation, when asked about the difficulties of managing user safety at such a vast scale, Baszucki again described the situation as a business opportunity. He pointed out that the gaming industry is worth $190 billion, and Roblox currently captures about three percent of that market. Baszucki suggested that this scale allows individual game creators to thrive within Roblox’s broader ecosystem, which includes built-in chat features and user moderation systems.

Safety Concerns and Moderation Challenges on Roblox

The podcast hosts pressed Baszucki on a 2024 report by short seller and activist firm Hindenburg Research, titled “Roblox: Inflated Key Metrics For Wall Street And A Pedophile Hellscape For Kids.” The report accused Roblox of cutting spending on user safety to boost growth figures for investors. Baszucki deflected these claims by noting that Hindenburg Research had ceased operations earlier this year.

He argued that Roblox is improving its safety measures, comparing the adoption of AI moderation tools to the move from manual labor to an assembly line in manufacturing. Roblox announced an AI moderation system over the summer, designed to detect early signs of child endangerment. However, many users have expressed frustration, claiming that the AI fails to stop harmful content effectively.

One user, commenting on the game MeepCity, said it has had “extreme amounts of inappropriate content” for years and questioned whether AI moderation can detect such behavior or whether harmful content is simply being allowed to persist.

It remains uncertain whether the new facial recognition technology will be sufficient to prevent sexual predators from exploiting Roblox. Given the platform’s history of ineffective safety measures and the CEO’s contentious remarks on the Hard Fork podcast, many remain skeptical about Roblox’s ability to fully address these serious issues.

